About the evidence

We set out in this project to do two things. The first was to discover whether the evidence available to us about grantee-level outcomes was suitable for producing a classification of funding outcomes, a ‘big picture’ of the changes brought about through PHF funding. As we’ve already discussed, we found that it was suitable and that it could also allow us to develop a finer-grained understanding of the types of change contributing to each broad outcome. Having developed the classification, we established that it was possible to code the evidence and produce a map showing how many grants and Special Initiatives had contributed to each outcome.

Our second main purpose in carrying out a systematic review of grantees’ evidence was to understand whether we, as the funder, might need to do more to help grantees generate evidence that would be even more useful to both them and the Foundation. Here we were concerned not with what the grantees’ and evaluators’ evidence told us about the impact of our funding but with exploring the characteristics of the evidence, to understand how much it can tell us and grantees, and what its limitations are.

The importance of the evidence to grantees

In our relationships with grantees, we are aware of the value and importance to the grantee of appropriate types of evidence about the outcomes of their work, whether those outcomes are gratifying or disappointing. The Foundation’s approach to grantmaking involves agreeing with each grantee what their intended outcomes and targets are. While we provide reporting guidelines, we do not require evidence or reports to follow a particular structure. This is because we believe that the information grantees collect about their work should be as useful to them in managing and improving their activities as it is in informing us. We want it to be seen by the grantee as central to their work, rather than as nothing more than a funding requirement.

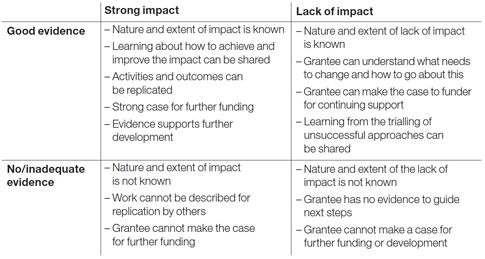

We’ve summarised the consequences to grantees of having either good evidence or lacking any or adequate evidence about both strong impact and lack of impact:

The quality of the evidence

To be counted and included in the map, any piece of evidence had to be judged by the PHF team doing the work to give a sufficiently plausible and convincing account that an outcome had been achieved. We took a conservative approach to these judgements, erring on the side of caution, so as to avoid the possibility of over-estimating or over-claiming impact. There were some, though relatively few, instances of outcomes being reported with little or no evidence to back this up and these were excluded from the count. It is probable, therefore, that we have under-estimated impact.

It was quickly apparent that evidence quality sometimes varied between the different sub-outcomes reported by the same grantee in the same report and that quality varied quite widely across the whole set. So, to help to answer our second question, about whether steps were needed to improve the type and quality of evidence, we assessed the quality of evidence provided for each of the 573 instances of sub-outcomes that we coded.

For this exercise to be useful, our assessments needed to be based on appropriate criteria and to be consistent. We devised five criteria – to do with rigour, clarity, appropriate measurement, completeness and depth – and applied these to each sub-outcome, keeping expectations proportional to what we know of grantees’ capacity and the nature of the funded work. Just under a third (30%) of the evidence was assessed as ‘good’, and this included some exemplary cases. Fifteen per cent was ‘poor’, with the rest – the overall majority (55%) – falling in between and labelled by us as ‘average’.
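To give a sense of scale, the short sketch below converts the reported percentages into approximate numbers of sub-outcomes. It assumes the percentages are rounded shares of the 573 coded sub-outcomes, so the counts are estimates rather than figures taken from the review; the names `TOTAL_SUB_OUTCOMES`, `SHARES` and `approximate_counts` are illustrative only.

```python
# Approximate counts behind the reported quality ratings.
# Assumption: each percentage is a rounded share of the 573 coded
# sub-outcomes, so the results are estimates, not reported figures.

TOTAL_SUB_OUTCOMES = 573
SHARES = {"good": 30, "average": 55, "poor": 15}  # per cent, as reported


def approximate_counts(total, shares):
    """Convert percentage shares into rounded whole-number counts."""
    return {label: round(total * pct / 100) for label, pct in shares.items()}


counts = approximate_counts(TOTAL_SUB_OUTCOMES, SHARES)
print(counts)  # {'good': 172, 'average': 315, 'poor': 86}
```

On these assumptions, roughly 172 sub-outcomes had ‘good’ evidence, about 315 ‘average’ and about 86 ‘poor’.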

The wider scene

We would be interested in learning from any similar work elsewhere. It would be particularly useful to understand how evidence quality varies between different grant-making strategies. We suspect, for example, that evidence quality is better when a funder focuses on a small number of objectives in a programme and provides intensive support and guidance to grantees, throughout the funding period, to help them meet standardised outcomes, using agreed evaluation methods and metrics. Funders not operating in this way, including PHF, need to develop different approaches to evidence quality.

A recent survey¹ of 1,000 charities by New Philanthropy Capital, part-funded by PHF, indicates that many in the charitable sector need more support to generate the type of evidence that they need and their funders require. It seems that the case for the importance of good evidence has been made convincingly to most charities: of those surveyed, 78% believed that measuring impact makes organisations more effective. Yet only 25% had been able to use evaluation to improve services. Barriers to better impact measurement included: a lack of skills and expertise (61%), not knowing what to measure (50%) or how to measure (53%) and a lack of funding and resources (78%).

We were concerned in this process not only with grantees’ reports but also with assessing the impact of our Special Initiatives, most of which are ongoing. We found that it was not always straightforward to extract, from evaluators’ reports and our own monitoring information, what we need to give a complete picture of the degree of impact to date.

Timing of evidence collection

As well as developing this overall assessment of evidence quality, to inform our future strategy, we looked at the timing of final reporting by grantees and evaluators. At PHF we try to pay particular attention to the types of change that can take a long time to bring about and to doing what we can to ensure that changes are more than short-lived. Some outcomes take longer than the lifetime of a grant to become fully established and there are areas where outcomes can only be properly discerned after a longer period of time. We found that some of our evidence in some parts of the map was evidence of outputs that could be expected to lead to the outcome but had not yet done so.

For example, as part of our ‘voice and influence’ outcome, some of the evidence presented is of structures that present new opportunities for users to have influence on service providers and of commitments to act on users’ recommendations, rather than of actual service change being implemented. By following developments for longer, we would know more about whether, for example, the young people sitting on organisations’ boards, and the user groups involved in training statutory sector workers, led to services meeting their needs more effectively.

For 25% of the 573 sub-outcomes, we judged that it could have been both useful and feasible to have arranged some follow-up work with the grantee, to see if outcomes were further developed or sustained beyond the end of the grant.

Evidence for learning and improvement

As a funder we are interested in learning and improvement as much as evidence of impact. We aim to do more to facilitate the sharing of experience between grantees; evidence about why innovations worked, or did not produce the results grantees and we had hoped for, is a rich and valuable resource.

We therefore noted whether grantees’ reports provided useful learning and reflections that might help other grantees working on similar issues or in similar ways. Useful information about how and why outcomes were successfully achieved was provided for 59% of the sub-outcomes reported. For 9% there was some useful reflection on explanations for approaches failing or being less successful than intended. The information about reasons for success included a generally higher level of analysis and reflection than about reasons for lack of success, which tended to be much weaker.

In summary, we identified three main areas – overall quality of impact evidence, longer-term follow-up and reflections on reasons for success or lack of it – in which improvements in evidence would allow a deeper understanding of impact and enhance learning about how to improve outcomes, by both the Foundation and grantees.

Attribution or contribution?

Finally, as for most funders and for all with an interest in evaluation, the attribution of outcomes to funding is an important issue. There are two aspects to this: identifying the activities that would not have existed without the funding and knowing whether the outcomes reported are the result of those activities alone or influenced as well by other factors.

During the grant approval process and before a grantee receives the first payment and begins work, detailed discussions between PHF staff and grantees lead to agreement about exactly what the funding is to be used for and how it relates to other funding and activities. In many cases, therefore, it is possible to attribute change to the funding, in the sense that the grant created activities that would not otherwise have existed. Sometimes, however, we make joint funding arrangements, which make it more difficult to distinguish the outcomes of the separate grants, and it would be unreasonable to expect the grantee to do so.

On the other point – would change have happened anyway – we look for approaches to measurement and analysis that aim to link activity to outcome as clearly and convincingly as possible. But we also accept that it is not always possible to know whether outcomes are attributable to PHF funding alone or whether our funding contributed, with other factors, to outcomes. We sense a growing acceptance by many funders that it is not always possible to claim attribution and that convincing evidence of contribution is what we need.

Footnotes

- 1. Making an Impact. Eibhlín Ní Ógáin, Tris Lumley, David Pritchard. New Philanthropy Capital, October 2012.